TiDB Distributed eXecution Framework (DXF)

TiDB adopts a computing-storage separation architecture with excellent scalability and elasticity. Starting from v7.1.0, TiDB introduces a Distributed eXecution Framework (DXF) to further leverage the resource advantages of the distributed architecture. The goal of the DXF is to implement unified scheduling and distributed execution of tasks, and to provide unified resource management capabilities for both overall and individual tasks, which better meets users' expectations for resource usage.

This document describes the use cases, limitations, usage, and implementation principles of the DXF.

Use cases

In a database management system, in addition to the core transactional processing (TP) and analytical processing (AP) workloads, there are other important tasks, such as DDL operations, IMPORT INTO, TTL, ANALYZE, and Backup/Restore. These tasks need to process a large amount of data in database objects (tables), so they typically have the following characteristics:

- Need to process all data in a schema or a database object (table).

- Might need to be executed periodically, but at a low frequency.

- If the resources are not properly controlled, they are prone to affect TP and AP tasks, lowering the database service quality.

Enabling the DXF can solve the above problems and has the following three advantages:

- The framework provides unified capabilities for high scalability, high availability, and high performance.

- The DXF supports distributed execution of tasks, which can flexibly schedule the available computing resources of the entire TiDB cluster, thereby better utilizing the computing resources in a TiDB cluster.

- The DXF provides unified resource usage and management capabilities for both overall and individual tasks.

Currently, the DXF supports the distributed execution of the ADD INDEX and IMPORT INTO statements.

- ADD INDEX is a DDL statement used to create indexes. For example:

    ALTER TABLE t1 ADD INDEX idx1(c1);
    CREATE INDEX idx1 ON t1(c1);

- IMPORT INTO is used to import data in formats such as CSV, SQL, and Parquet into an empty table.

Limitations

The DXF can only schedule up to 16 tasks (including ADD INDEX tasks and IMPORT INTO tasks) simultaneously.

ADD INDEX limitations

- For each cluster, only one ADD INDEX task is allowed for distributed execution at a time. If a new ADD INDEX task is submitted before the current ADD INDEX distributed task has finished, the new ADD INDEX task is executed through a transaction instead of being scheduled by the DXF.

- Adding indexes on columns with the TIMESTAMP data type through the DXF is not supported, because it might lead to inconsistency between the index and the data.
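The limits above can be read as a small routing rule. The following Python sketch is a toy model of that rule only, not TiDB's implementation: the names `DxfState`, `route`, and `MAX_DXF_TASKS` are hypothetical, and the `"wait"` outcome when 16 tasks are already scheduled is an assumption, because this document does not describe what happens to a task submitted beyond that limit.

```python
from dataclasses import dataclass, field

MAX_DXF_TASKS = 16  # limit stated in this document


@dataclass
class DxfState:
    # Types of the tasks currently scheduled by the DXF (hypothetical bookkeeping).
    running: list[str] = field(default_factory=list)


def route(state: DxfState, task_type: str) -> str:
    """Decide how a newly submitted task would be handled under the documented limits."""
    # Only one ADD INDEX task can run in distributed mode per cluster;
    # a second one is executed through a transaction instead.
    if task_type == "ADD INDEX" and "ADD INDEX" in state.running:
        return "transaction"
    # Assumption: a task beyond the 16-task limit has to wait.
    if len(state.running) >= MAX_DXF_TASKS:
        return "wait"
    state.running.append(task_type)
    return "dxf"


state = DxfState()
print(route(state, "ADD INDEX"))    # scheduled by the DXF
print(route(state, "ADD INDEX"))    # falls back to a transaction
print(route(state, "IMPORT INTO"))  # scheduled by the DXF
```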

Prerequisites

Before using the DXF to execute ADD INDEX tasks, you need to enable the Fast Online DDL mode.

Adjust the following system variables related to Fast Online DDL:

- tidb_ddl_enable_fast_reorg: used to enable Fast Online DDL mode. It is enabled by default starting from TiDB v6.5.0.

- tidb_ddl_disk_quota: used to control the maximum quota of local disks that can be used in Fast Online DDL mode.

Adjust the following configuration item related to Fast Online DDL:

- temp-dir: specifies the local disk path that can be used in Fast Online DDL mode.
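As a minimal sketch of the system variable part of these prerequisites, the following Python helper builds the two SET GLOBAL statements. The helper name `fast_online_ddl_statements` is hypothetical and the 100 GiB quota is only an illustrative value; the sketch assumes that tidb_ddl_disk_quota is expressed in bytes. The temp-dir item is not covered because it belongs to the TiDB configuration file rather than to a SQL session.

```python
def fast_online_ddl_statements(disk_quota_bytes: int) -> list[str]:
    """Build the SQL statements that enable Fast Online DDL and set its local disk quota."""
    if disk_quota_bytes <= 0:
        raise ValueError("disk_quota_bytes must be positive")
    return [
        "SET GLOBAL tidb_ddl_enable_fast_reorg = ON;",
        f"SET GLOBAL tidb_ddl_disk_quota = {disk_quota_bytes};",
    ]


# 100 GiB is an illustrative quota, not a recommendation.
for statement in fast_online_ddl_statements(100 * 1024**3):
    print(statement)
```

The printed statements can then be run through any MySQL-compatible client connected to the cluster.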

Usage

To enable the DXF, set the value of tidb_enable_dist_task to ON:

    SET GLOBAL tidb_enable_dist_task = ON;

When the DXF tasks are running, the statements supported by the framework (such as ADD INDEX and IMPORT INTO) are executed in a distributed manner. All TiDB nodes run DXF tasks by default.

In general, for the following system variables that might affect the distributed execution of DDL tasks, it is recommended that you use their default values:

- tidb_ddl_reorg_worker_cnt: use the default value 4. The recommended maximum value is 16.

- tidb_ddl_reorg_priority

- tidb_ddl_error_count_limit

- tidb_ddl_reorg_batch_size: use the default value. The recommended maximum value is 1024.
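If you do change these variables, a quick check against the recommended maximums can catch values that are too high. The following Python sketch is illustrative only: `RECOMMENDED_MAX` and `over_recommended_max` are hypothetical names, and the input is assumed to be a plain dictionary of variable values that you have read from the cluster yourself.

```python
# Recommended maximum values taken from the list above.
RECOMMENDED_MAX = {
    "tidb_ddl_reorg_worker_cnt": 16,
    "tidb_ddl_reorg_batch_size": 1024,
}


def over_recommended_max(settings: dict[str, int]) -> list[str]:
    """Return the names of variables whose value exceeds the recommended maximum."""
    return sorted(
        name
        for name, limit in RECOMMENDED_MAX.items()
        if name in settings and settings[name] > limit
    )


current = {"tidb_ddl_reorg_worker_cnt": 4, "tidb_ddl_reorg_batch_size": 2048}
print(over_recommended_max(current))
```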

Starting from v7.4.0, for TiDB Self-Hosted, you can adjust the number of TiDB nodes that perform the DXF tasks according to actual needs. After deploying a TiDB cluster, you can set the instance-level system variable tidb_service_scope for each TiDB node in the cluster. When tidb_service_scope of a TiDB node is set to background, the TiDB node can execute the DXF tasks. When tidb_service_scope of a TiDB node is set to the default value '', the TiDB node cannot execute the DXF tasks. If tidb_service_scope is not set for any TiDB node in a cluster, the DXF schedules all TiDB nodes to execute tasks by default.

By default, the DXF schedules all TiDB nodes to execute distributed tasks. Starting from v7.4.0, for TiDB Self-Hosted clusters, you can control which TiDB nodes can be scheduled by the DXF to execute distributed tasks by configuring tidb_service_scope.

- For versions from v7.4.0 to v8.0.0, the optional values of tidb_service_scope are '' or background. If the current cluster has TiDB nodes with tidb_service_scope = 'background', the DXF schedules tasks to these nodes for execution. If the current cluster does not have TiDB nodes with tidb_service_scope = 'background', whether due to faults or normal scaling in, the DXF schedules tasks to nodes with tidb_service_scope = '' for execution.
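The node selection rule for v7.4.0 to v8.0.0 can be sketched as a small function. This is a model of the rule as described above, not TiDB code: the function name `dxf_target_nodes` and the node names are hypothetical, and the input is assumed to be a mapping from node name to that node's tidb_service_scope value.

```python
def dxf_target_nodes(scopes: dict[str, str]) -> list[str]:
    """Return the nodes that the DXF would schedule tasks to, given each node's tidb_service_scope."""
    background = sorted(node for node, scope in scopes.items() if scope == "background")
    if background:
        # At least one node is marked as background: only those nodes run DXF tasks.
        return background
    # No background node is available (never set, faulted, or scaled in):
    # fall back to the nodes that keep the default value ''.
    return sorted(node for node, scope in scopes.items() if scope == "")


print(dxf_target_nodes({"tidb-0": "", "tidb-1": "background", "tidb-2": "background"}))
print(dxf_target_nodes({"tidb-0": "", "tidb-1": ""}))
```

In the first call only the two background nodes are returned; in the second, where no node is set to background, both default nodes are returned.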

Implementation principles

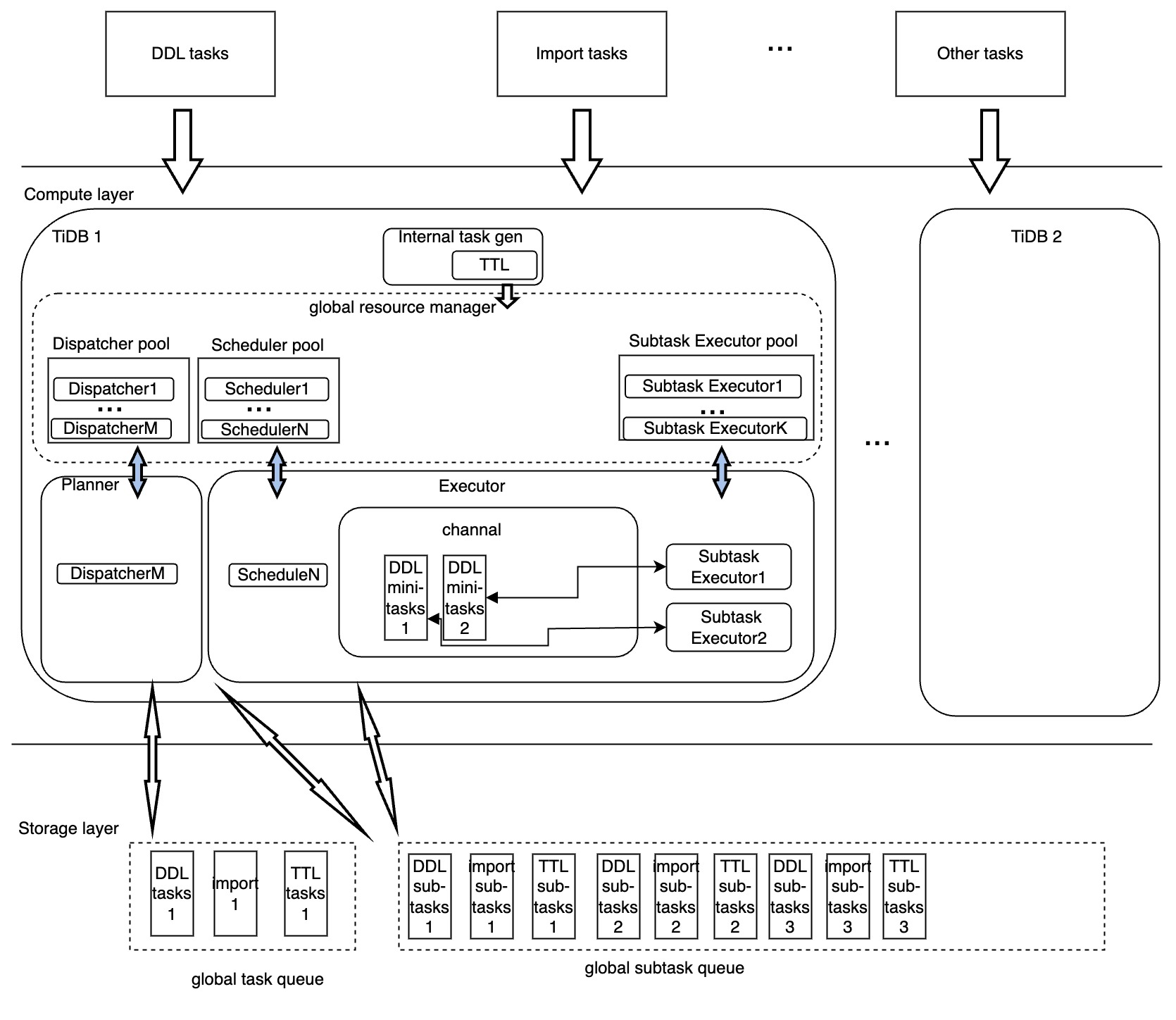

The architecture of the DXF is as follows:

As shown in the preceding diagram, the execution of tasks in the DXF is mainly handled by the following modules:

- Dispatcher: generates the distributed execution plan for each task, manages the execution process, converts the task status, and collects and feeds back the runtime task information.

- Scheduler: replicates the execution of distributed tasks among TiDB nodes to improve the efficiency of task execution.

- Subtask Executor: the actual executor of distributed subtasks. In addition, the Subtask Executor returns the execution status of subtasks to the Scheduler, and the Scheduler updates the execution status of subtasks in a unified manner.

- Resource pool: provides the basis for quantifying resource usage and management by pooling computing resources of the above modules.
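To make the division of roles concrete, the following Python sketch mimics the flow between the first three modules in a single process. It is a deliberately simplified toy, not the DXF's actual design: the function names, the round-robin split, the subtask naming, and the "succeed" status string are all assumptions made for illustration, and the resource pool is left out.

```python
def dispatch(task_id: str, num_subtasks: int, nodes: list[str]) -> dict[str, list[str]]:
    """Dispatcher role: split a task into subtasks and plan them across nodes.

    Round-robin assignment is an assumption of this sketch.
    """
    if not nodes:
        raise ValueError("at least one node is required")
    plan: dict[str, list[str]] = {node: [] for node in nodes}
    for i in range(num_subtasks):
        plan[nodes[i % len(nodes)]].append(f"{task_id}-{i}")
    return plan


def run_subtask(subtask_id: str) -> str:
    """Subtask Executor role: do the work and return the execution status."""
    return "succeed"  # placeholder for real work


def schedule(plan: dict[str, list[str]]) -> dict[str, str]:
    """Scheduler role: drive the subtasks on each node and record their status in one place."""
    status: dict[str, str] = {}
    for node, subtasks in plan.items():
        for subtask_id in subtasks:
            status[subtask_id] = run_subtask(subtask_id)
    return status


plan = dispatch("task1", 5, ["tidb-0", "tidb-1"])
print(plan)
print(schedule(plan))
```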