Multiple Data Centers in One City Deployment

As a NewSQL database, TiDB combines the best features of the traditional relational database and the scalability of the NoSQL database, and is highly available across data centers (DC). This document introduces the deployment of multiple DCs in one city.

Raft protocol

Raft is a distributed consensus algorithm. Using this algorithm, both PD and TiKV, among components of the TiDB cluster, achieve disaster recovery of data, which is implemented through the following mechanisms:

- The essential role of Raft members is to perform log replication and act as a state machine. Among Raft members, data replication is implemented by replicating logs. Raft members change their own states in different conditions to elect a leader to provide services.

- Raft is a voting system that follows the majority protocol. In a Raft group, if a member gets the majority of votes, its membership changes to leader. In other words, when the majority of nodes remain in the Raft group, a leader can be elected to provide services.

To take advantage of Raft's reliability, the following conditions must be met in a real deployment scenario:

- Use at least three servers in case one server fails.

- Use at least three racks in case one rack fails.

- Use at least three DCs in case one DC fails.

- Deploy TiDB in at least three cities in case data safety issue occurs in one city.

The native Raft protocol does not have a good support for an even number of replicas. Considering the impact of cross-city network latency, three DCs in the same city might be the most suitable solution to a highly available and disaster tolerant Raft deployment.

Three DCs in one city deployment

TiDB clusters can be deployed in three DCs in the same city. In this solution, data replication across the three DCs is implemented using the Raft protocol within the cluster. These three DCs can provide read and write services at the same time. Data consistency is not affected even if one DC fails.

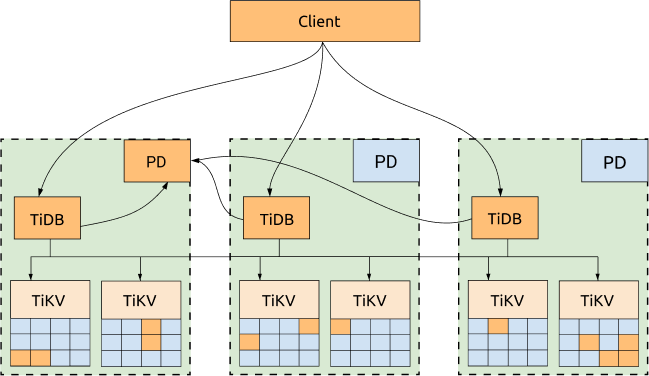

Simple architecture

TiDB, TiKV and PD are distributed among three DCs, which is the most common deployment with the highest availability.

Advantages:

- All replicas are distributed among three DCs, with high availability and disaster recovery capability.

- No data will be lost if one DC is down (RPO = 0).

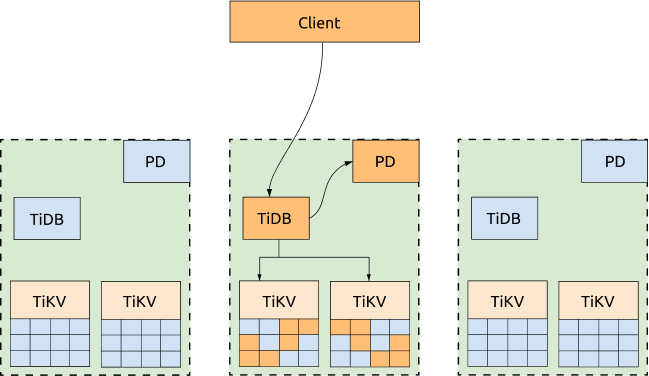

- Even if one DC is down, the other two DCs will automatically start leader election and automatically resume services within a reasonable amount of time (within 20 seconds in most cases). See the following diagram for more information:

Disadvantages:

The performance can be affected by the network latency.

- For writes, all the data has to be replicated to at least 2 DCs. Because TiDB uses 2-phase commit for writes, the write latency is at least twice the latency of the network between two DCs.

- The read performance will also be affected by the network latency if the leader is not in the same DC with the TiDB node that sends the read request.

- Each TiDB transaction needs to obtain TimeStamp Oracle (TSO) from the PD leader. So if the TiDB and PD leaders are not in the same DC, the performance of the transactions will also be affected by the network latency because each transaction with the write request has to obtain TSO twice.

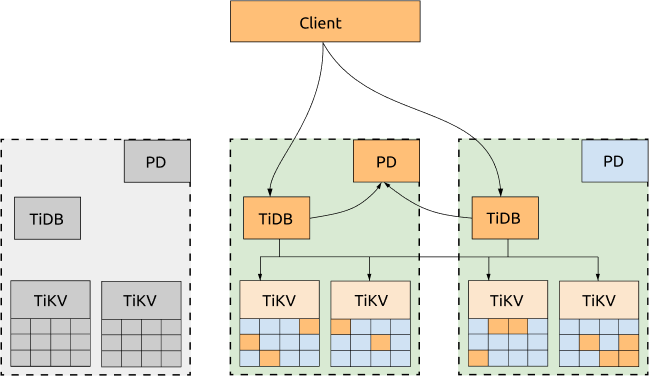

Optimized architecture

If not all of the three DCs need to provide services to the applications, you can dispatch all the requests to one DC and configure the scheduling policy to migrate all the TiKV Region leader and PD leader to the same DC. In this way, neither obtaining TSO nor reading TiKV Regions will be impacted by the network latency across DCs. If this DC is down, the PD leader and TiKV Region leader will be automatically elected in other surviving DCs, and you just need to switch the requests to the DCs that are still alive.

Advantages:

The cluster's read performance and the capability to get TSO are improved. A configuration template of scheduling policy is as follows:

-- Evicts all leaders of other DCs to the DC that provides services to the application.

config set label-property reject-leader LabelName labelValue

-- Migrates PD leaders and sets priority.

member leader transfer pdName1

member leader_priority pdName1 5

member leader_priority pdName2 4

member leader_priority pdName3 3

Disadvantages:

- Write scenarios are still affected by network latency across DCs. This is because Raft follows the majority protocol and all written data must be replicated to at least two DCs.

- The TiDB server that provides services is only in one DC.

- All application traffic is processed by one DC and the performance is limited by the network bandwidth pressure of that DC.

- The capability to get TSO and the read performance are affected by whether the PD server and TiKV server are up in the DC that processes application traffic. If these servers are down, the application is still affected by the cross-center network latency.

Deployment example

This section provides a topology example, and introduces TiKV labels and TiKV labels planning.

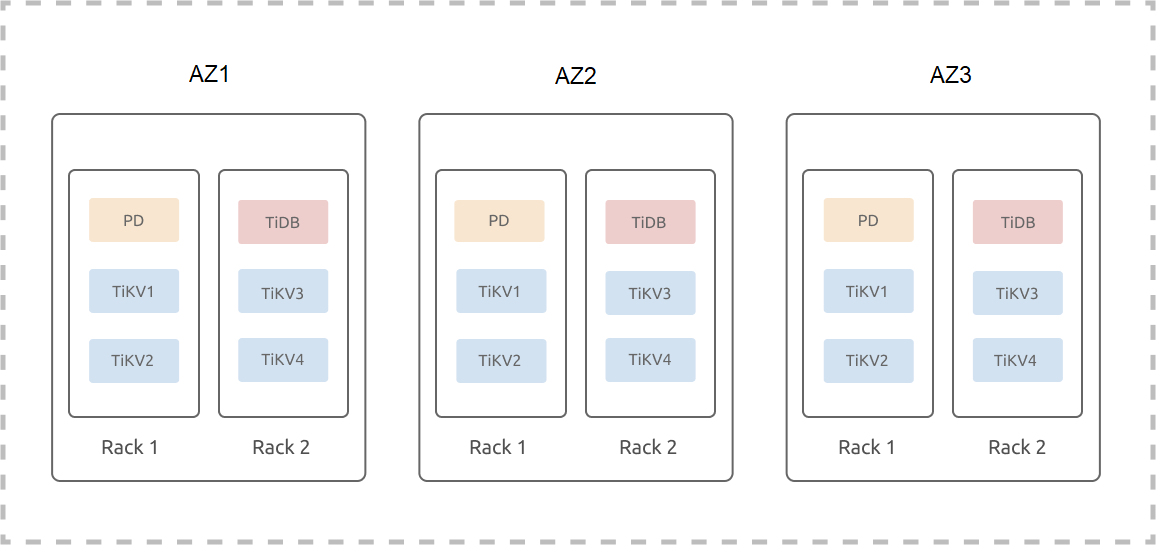

Topology example

The following example assumes that three DCs (IDC1, IDC2, and IDC3) are located in one city; each IDC has two sets of racks and each rack has three servers. The example ignores the hybrid deployment or the scenario where multiple instances are deployed on one machine. The deployment of a TiDB cluster (three replicas) on three DCs in one city is as follows:

TiKV labels

TiKV is a Multi-Raft system where data is divided into Regions and the size of each Region is 96 MB by default. Three replicas of each Region form a Raft group. For a TiDB cluster of three replicas, because the number of Region replicas is independent of the TiKV instance numbers, three replicas of a Region are only scheduled to three TiKV instances. This means that even if the cluster is scaled out to have N TiKV instances, it is still a cluster of three replicas.

Because a Raft group of three replicas tolerates only one replica failure, even if the cluster is scaled out to have N TiKV instances, this cluster still tolerates only one replica failure. Two failed TiKV instances might cause some Regions to lose replicas and the data in this cluster is no longer complete. SQL requests that access data from these Regions will fail. The probability of two simultaneous failures among N TiKV instances is much higher than the probability of two simultaneous failures among three TiKV instances. This means that the more TiKV instances the Multi-Raft system is scaled out to have, the less the availability of the system.

Because of the limitation described above, label is used to describe the location information of TiKV. The label information is refreshed to the TiKV startup configuration file with deployment or rolling upgrade operations. The started TiKV reports its latest label information to PD. Based on the user-registered label name (the label metadata) and the TiKV topology, PD optimally schedules Region replicas and improves the system availability.

TiKV labels planning example

To improve the availability and disaster recovery of the system, you need to design and plan TiKV labels according to your existing physical resources and the disaster recovery capability. You also need to configure in the cluster initialization configuration file according to the planned topology:

server_configs:

pd:

replication.location-labels: ["zone","dc","rack","host"]

tikv_servers:

- host: 10.63.10.30

config:

server.labels: { zone: "z1", dc: "d1", rack: "r1", host: "30" }

- host: 10.63.10.31

config:

server.labels: { zone: "z1", dc: "d1", rack: "r1", host: "31" }

- host: 10.63.10.32

config:

server.labels: { zone: "z1", dc: "d1", rack: "r2", host: "32" }

- host: 10.63.10.33

config:

server.labels: { zone: "z1", dc: "d1", rack: "r2", host: "33" }

- host: 10.63.10.34

config:

server.labels: { zone: "z2", dc: "d1", rack: "r1", host: "34" }

- host: 10.63.10.35

config:

server.labels: { zone: "z2", dc: "d1", rack: "r1", host: "35" }

- host: 10.63.10.36

config:

server.labels: { zone: "z2", dc: "d1", rack: "r2", host: "36" }

- host: 10.63.10.37

config:

server.labels: { zone: "z2", dc: "d1", rack: "r2", host: "37" }

- host: 10.63.10.38

config:

server.labels: { zone: "z3", dc: "d1", rack: "r1", host: "38" }

- host: 10.63.10.39

config:

server.labels: { zone: "z3", dc: "d1", rack: "r1", host: "39" }

- host: 10.63.10.40

config:

server.labels: { zone: "z3", dc: "d1", rack: "r2", host: "40" }

- host: 10.63.10.41

config:

server.labels: { zone: "z3", dc: "d1", rack: "r2", host: "41" }

In the example above, zone is the logical availability zone layer that controls the isolation of replicas (three replicas in the example cluster).

Considering that the DC might be scaled out in the future, the three-layer label structure (dc, rack, host) is not directly adopted. Assuming that d2, d3, and d4 are to be scaled out, you only need to scale out the DCs in the corresponding availability zone and scale out the racks in the corresponding DC.

If this three-layer label structure is directly adopted, after scaling out a DC, you might need to apply new labels and the data in TiKV needs to be rebalanced.

High availability and disaster recovery analysis

The multiple DCs in one city deployment can guarantee that if one DC fails, the cluster can automatically recover services without manual intervention. Data consistency is also guaranteed. Note that scheduling policies are used to optimize performance, but when failure occurs, these policies prioritize availability over performance.